You take an Android phone, snap a photo, tap a button, and Google treats the image as your search query. It analyses both imagery and any readable text inside the photo, then returns results based on what it recognises.

This is visual search, meaning search where a captured image becomes the input instead of typed words. The point is not a clever camera trick. The point is that “point and shoot” can replace “type and search” in moments where you cannot name what you are looking at.

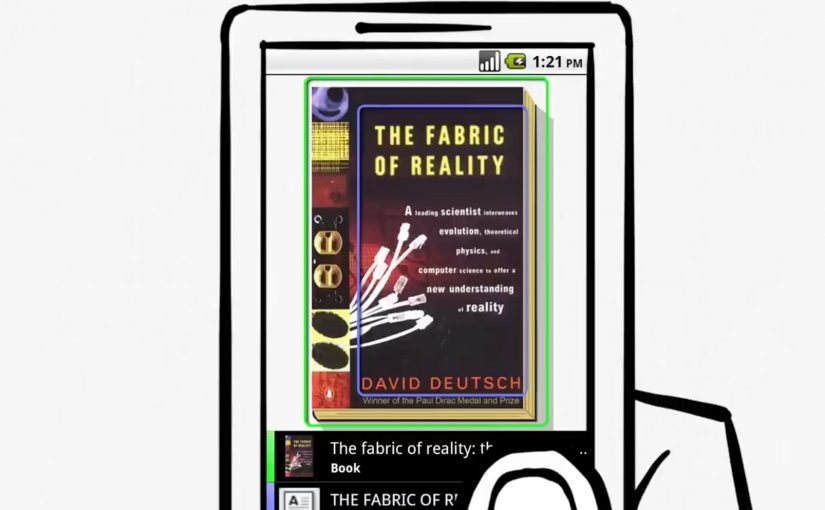

The iPhone already has an app that lets users run visual searches for price and store details by photographing CD covers and books. Google now pushes the same behaviour to a broader, more general-purpose level.

From typing to pointing

Google Goggles changes the input model. The photo becomes the query, and the system works across two parallel signals:

- What the image contains, via visual recognition.

- What the image says, via text recognition.

Because the system can extract both shape and text from the same frame, it removes the translation step between seeing something and turning it into keywords. That translation step is where most friction lives on a small mobile keyboard.
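
To make the two-signal idea concrete, here is a minimal sketch of how recognised objects and recognised text from one frame could be merged into a single keyword query. It illustrates the concept only and is not how Goggles is implemented. `FrameSignals` and `build_query` are hypothetical names, and putting text before visual labels is an assumption of this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class FrameSignals:
    """Hypothetical container for what a recogniser extracted from one photo."""
    visual_labels: list[str] = field(default_factory=list)  # what the image contains
    text_snippets: list[str] = field(default_factory=list)  # what the image says


def build_query(signals: FrameSignals) -> str:
    """Merge both signals into one keyword query, dropping duplicates."""
    seen = set()
    terms = []
    # Assumption of this sketch: text read from the frame goes first, because
    # printed titles and names tend to be more specific than a generic label.
    for term in signals.text_snippets + signals.visual_labels:
        key = term.strip().lower()
        if key and key not in seen:
            seen.add(key)
            terms.append(term.strip())
    return " ".join(terms)


if __name__ == "__main__":
    photo = FrameSignals(
        visual_labels=["book cover", "Moby Dick"],
        text_snippets=["Moby Dick", "Herman Melville"],
    )
    print(build_query(photo))
```

The user never types any of these terms: the frame supplies them, which is exactly the translation step the camera removes.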

Why “internet-scale” recognition is the point

Google positions this as search at internet scale, not a small database lookup. The index described here includes 1 billion images, which signals the ambition to recognise the long tail of everyday objects, covers, signs, and printed surfaces.

This matters for in-the-moment consumer and retail discovery on mobile, where intent often starts with something you can see but cannot name.

Why it lands beyond “cool tech”

When the camera becomes a search interface, the web becomes more accessible in moments where typing is awkward or impossible. You can point, capture, and retrieve meaning in a single flow, using the environment as the starting point.

Extractable takeaway: The winning experiences are the ones that convert recognition into an immediate next step. Identify what the user is looking at, then answer the implied question, such as “what is this?”, “where can I buy it?”, “what does it cost?”, or “how do I use it?”.

When the camera becomes the keyboard, every physical surface becomes a potential search box. Brands that make their packaging, signage, and product imagery easy for humans and machines to read get discovered even when no one types their name.

The bet Google is making

This is a meaningful shift in input, but it will not replace typed search. It will win the moments where the user’s intent is anchored in the physical world and the fastest way to express that intent is to show the object.

What to steal if you build digital experiences

- Design for machine-readable cues. High-contrast logos, consistent product shots, and legible typography increase the odds that recognition resolves to the right thing.

- Assume zero-keyboard intent. Build journeys that start from what people see around them, not only from brand names and product model numbers.

- Plan for ambiguity. Recognition will be probabilistic, so your assets should help disambiguate similar-looking items.

- Treat demos as proof, not decoration. If your pitch is “this feels different,” show it working, as the original Goggles demo does.
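
If you build on top of a recognition service, the "plan for ambiguity" point could be sketched as follows. This is a hypothetical illustration, not any vendor's API: `Candidate`, `resolve`, and both thresholds are assumptions chosen for the example. The idea is to accept the top result only when it is both confident and clearly ahead of the runner-up, and otherwise hand the user a short list to choose from.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    label: str
    score: float  # hypothetical confidence in [0, 1]


def resolve(candidates, min_score=0.6, min_margin=0.15):
    """Return ("match", [best]) when one candidate clearly wins,
    ("choose", shortlist) when the user should disambiguate,
    and ("none", []) when nothing was recognised."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    if not ranked:
        return ("none", [])
    best = ranked[0]
    runner_up_score = ranked[1].score if len(ranked) > 1 else 0.0
    # Accept only a confident result that is well separated from the next one.
    if best.score >= min_score and best.score - runner_up_score >= min_margin:
        return ("match", [best])
    # Otherwise show at most three options instead of guessing.
    return ("choose", ranked[:3])


if __name__ == "__main__":
    print(resolve([Candidate("Brand A cereal", 0.9), Candidate("Brand B cereal", 0.5)]))
    print(resolve([Candidate("Brand A cereal", 0.7), Candidate("Brand B cereal", 0.65)]))
```

Distinctive packaging and legible text widen the gap between the top candidate and its look-alikes, which is what moves a result from "choose" to "match".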

A few fast answers before you act

What does Google Goggles do, in one sentence?

It lets you take a photo on an Android phone and uses the imagery and any readable text in that photo as your search query.

What is the comparison point mentioned here?

An iPhone app already enables visual searches for price and store details via photos of CD covers and books.

What signals does Goggles read from a photo?

It uses both visual recognition of what is in the image and text recognition of what is written in the image.

What is the scale of the image index described?

Google describes an index that includes 1 billion images.

What is included as supporting proof in the original post?

A demo video showing the visual search capability.