An owner’s manual you point at the car

To make life easier for car owners, Hyundai has built an augmented reality app called the Virtual Guide. It lets Hyundai owners use their smartphones to get familiar with their car and learn how to perform basic maintenance without delving into a hundred-page owner’s manual.

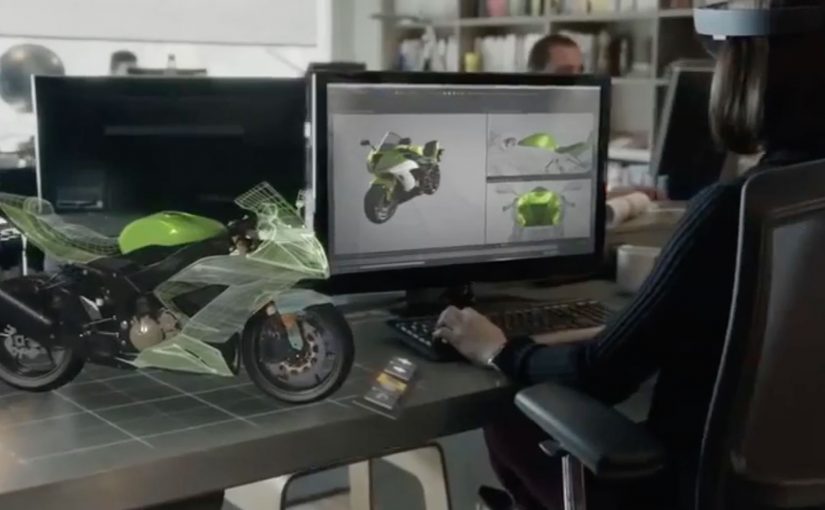

Here, augmented reality means on-screen overlays that label real-world parts and show step-by-step guidance while you view the car through the phone camera.

Here is a short demo video of the app from The Verge at CES 2016.

The clever part: help appears exactly where you need it

Instead of searching through pages, you point your phone at the car and learn in context. That one shift, from reading about a feature to seeing guidance on the actual part, makes learning faster and less frustrating.

In consumer product and mobility brands, the highest-value help shows up at the moment of use, not in a document you have to hunt for.

The real question is whether your product help meets people where the problem happens, or sends them off to search.

In-context, camera-based guidance should be the default for “how do I” tasks. Manuals should be the fallback.

Why this is a big deal for everyday ownership

Most drivers who ignore the manual do so not because they do not care, but because the effort is too high at the moment they need help. AR lowers that effort by turning “How do I…?” into a quick visual answer while you are standing next to the car.

Extractable takeaway: If you can put guidance on the real object in front of someone, you remove the search step. That makes follow-through more likely.

What Hyundai is really building here

Hyundai is building toward fewer support moments, fewer avoidable service misunderstandings, and a smoother owner experience that strengthens trust in the brand long after purchase.

The Virtual Guide app will be available within the next month or two for the 2015 and 2016 Hyundai Sonata and will come to the rest of the Hyundai range later this year.

Patterns to borrow for product help

- Move instruction from documentation into the environment. In-context guidance beats search.

- Design for the real moment of need. Standing next to the product, phone in hand.

- Make “basic maintenance” feel doable. Confidence is a retention lever.

A few fast answers before you act

What is Hyundai Virtual Guide?

An augmented reality app that helps Hyundai owners learn car features and perform basic maintenance using a smartphone instead of relying on the printed owner’s manual.

How does it work in practice?

You use your phone to view parts of the car and get guidance designed to help you understand features and maintenance steps in context.

Which models does the post say it supports first?

The post says it will be available first for the 2015 and 2016 Hyundai Sonata, then expand across the Hyundai range later in the year.

Where was the demo shown?

The post references a demo video from The Verge at CES 2016.