From “pick 20 tools” to “run a working stack”

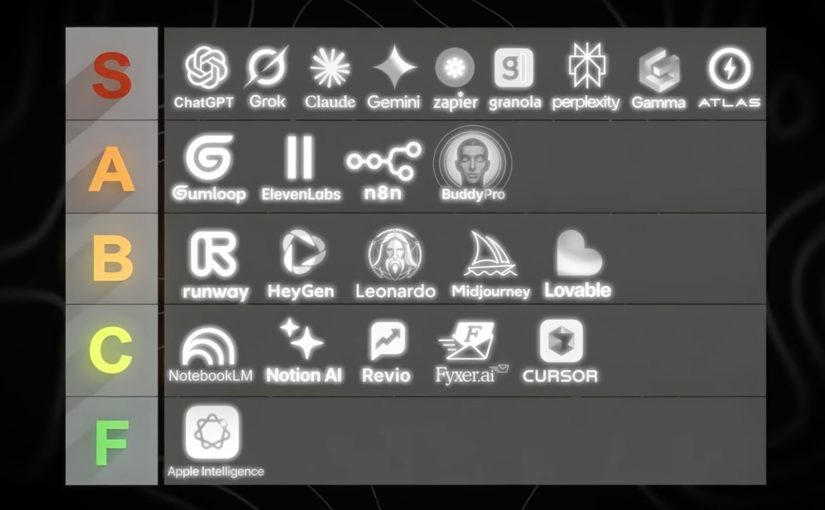

I recently came across the video below from Dan Martell, which frames the “zero-code million-dollar business” as a tool-selection problem. That framing is useful. However, the right conclusion for marketers and brands is not “go pick 20 tools”. It is “stop shopping, start stacking”. In 2026, the skill to build is the ability to pick, connect, and operationalize capabilities.

By “working AI tech stack” I mean a small, repeatable set of tools that moves work from input to output with the least friction. It is not a folder of bookmarks. It is a production line.

The useful takeaway isn’t the list. It’s the operating model.

Most people consume AI content and walk away with a shopping list. That is the wrong takeaway. The useful one is operational: arrange capabilities into a workflow that consistently produces outputs, such as briefs, assets, approvals, launches, responses, and measurable improvements.

A list creates options. A stack creates throughput. Throughput is how reliably your team converts intent into shipped work, week after week, without rebuilding the process every time.

The mechanism: a stack is just clean handoffs

A working AI tech stack is a sequence with explicit handoffs:

Inputs → Synthesis → Creation → Automation → Distribution → Measurement

Each step has one job. Each step produces an artifact someone else can use. Each handoff is defined so the work does not stall in Slack, email, or “waiting for approval”.
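To make “one job, one artifact, one handoff” concrete, here is a minimal Python sketch of the line. The `Artifact` class, `make_step`, and `run_line` are hypothetical names invented for illustration, and each step is a placeholder that only records that it ran. In a real stack each step would call whichever tool owns it.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Artifact:
    """What one step hands to the next (hypothetical structure)."""
    stage: str
    content: str
    history: list[str] = field(default_factory=list)


Step = Callable[[Artifact], Artifact]

# The six stages named in the sequence above, in order.
STAGES = ["Inputs", "Synthesis", "Creation", "Automation", "Distribution", "Measurement"]


def make_step(stage: str) -> Step:
    """Build a placeholder step that stamps its stage on a new artifact."""
    def step(artifact: Artifact) -> Artifact:
        return Artifact(
            stage=stage,
            content=f"{artifact.content} -> {stage}",
            history=artifact.history + [stage],
        )
    return step


def run_line(raw: str) -> Artifact:
    """Pass one piece of raw material through every stage in order."""
    artifact = Artifact(stage="raw", content=raw)
    for stage in STAGES:
        artifact = make_step(stage)(artifact)
    return artifact


result = run_line("workshop notes")
print(result.history)
```

The design point is that every step takes the previous artifact and returns a new one, so the handoff is explicit in the function signature rather than implied in a chat thread.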

In global FMCG and retail marketing organizations, the bottleneck is rarely ideas. It is the handoffs between people, tools, and approvals.

Why this lands with leaders

Tool lists feel like progress because they are concrete and low-commitment. You can bookmark them and feel “covered”. Stacks feel harder because they force decisions: what is the workflow, who owns each step, and where do we enforce quality and risk controls?

Extractable takeaway: If you cannot name the exact step a tool owns in a repeatable workflow (input → transformation → handoff → output), it is not part of your stack yet. It is just potential.
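That test can be written down as a simple check. The sketch below is illustrative only: `ToolEntry` and its field names are assumptions, not a standard schema, and the second entry uses a made-up tool name.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolEntry:
    """One row in a hypothetical stack inventory."""
    name: str
    step: Optional[str] = None        # the step the tool owns
    takes: Optional[str] = None       # the input it receives
    produces: Optional[str] = None    # the output it creates
    handoff_to: Optional[str] = None  # who or what receives the output


def in_stack(tool: ToolEntry) -> bool:
    """A tool is part of the stack only if every field can be named."""
    return all([tool.step, tool.takes, tool.produces, tool.handoff_to])


mapped = ToolEntry(
    name="NotebookLM",
    step="Synthesis",
    takes="research PDFs",
    produces="launch FAQ",
    handoff_to="creative lead",
)
bookmarked = ToolEntry(name="SomeNewTool")  # hypothetical tool with no owned step

print(in_stack(mapped), in_stack(bookmarked))
```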

The business intent: less software. More shipped outcomes

For marketers and brands, the goal is not “using AI”. The real question is not how many AI tools you can name, but whether your team can move work through a repeatable line with clear ownership and handoffs.

The goal is operational leverage:

- Faster cycle time from brief to asset.

- Fewer revision loops because synthesis and constraints are done upfront.

- Fewer dropped balls because handoffs are automated.

- More reuse of institutional knowledge because answers are captured once and searchable.

- Higher output without lowering standards.

This is also where governance belongs. A stack needs rules about what data can go where, who can approve what, and which steps require a human decision.
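One way to keep those rules from living only in people's heads is to write them as data and check each handoff against them. The following is a minimal sketch under assumptions: the `Policy` structure, the data classifications (`public`, `internal`, `customer_pii`), and the role names are all hypothetical examples, not a recommended taxonomy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    allowed_data: dict[str, set[str]]  # step -> data classes permitted there
    approvers: dict[str, set[str]]     # step -> roles allowed to approve
    human_required: set[str]           # steps that need a human decision


def check(policy: Policy, step: str, data_class: str,
          approver_role: Optional[str] = None) -> list[str]:
    """Return a list of rule violations for one handoff (empty means OK)."""
    violations = []
    if data_class not in policy.allowed_data.get(step, set()):
        violations.append(f"{data_class} data is not allowed in {step}")
    if step in policy.human_required:
        if approver_role is None:
            violations.append(f"{step} requires a human decision")
        elif approver_role not in policy.approvers.get(step, set()):
            violations.append(f"{approver_role} cannot approve {step}")
    return violations


# Example rules, invented for illustration.
EXAMPLE_POLICY = Policy(
    allowed_data={"synthesis": {"public", "internal"}, "distribution": {"public"}},
    approvers={"distribution": {"brand_lead"}},
    human_required={"distribution"},
)

print(check(EXAMPLE_POLICY, "distribution", "internal", "intern"))
```

Returning a list of violations rather than a single yes or no makes it easier to show a reviewer exactly which rule blocked a handoff.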

The working stack blueprint: tools mapped from Inputs to Measurement

Below are the 20 tools referenced in the video, placed where they most naturally fit in the production line. You can use fewer than 20. The point is the flow.

Inputs: capture raw material without losing signal

Manus

Manus is designed to act more like a task runner than a chatbot. You give it a goal and it works through steps to deliver outputs, not just advice. Example: collect competitor screenshots, extract claims, summarize patterns, and deliver a brief plus a slide outline.

SocialSweep

SocialSweep is positioned as a way to search your network and relationship graph with context. It helps you identify who you know, why they are relevant, and what to say. Example: find warm paths to retail media decision-makers, then draft an intro message that references shared context.

HireAlli

HireAlli is positioned around capturing commercial intent from website traffic so teams can follow up faster. Example: flag repeat visits to pricing pages, then route the lead to sales with a summary of pages viewed and a recommended next message.

Synthesis: turn messy inputs into a usable brief and plan

NotebookLM

NotebookLM is useful when you want answers grounded in the sources you provide. It helps you summarize, compare, and extract structure from documents. Example: upload research PDFs and prior campaign docs, then generate a launch FAQ and a messaging hierarchy that stays consistent with those materials.

Claude

Claude is a general assistant that excels at drafting, rewriting, and structuring thinking. Use it to turn raw notes into clear decisions and action plans. Example: paste a workshop transcript and request a decision log, assumptions, risks, and a one-page brief for stakeholders.

ChatGPT

ChatGPT is a general-purpose assistant for ideation, drafting, analysis, and reusable workflows. It is especially useful when you iterate toward a spec. Example: ask clarifying questions for a campaign brief, then output a structured creative and media spec the team can execute.

Creation: produce assets that are actually shippable

Gamma

Gamma helps turn rough thinking into a structured deck or document quickly. It is strong when the bottleneck is narrative structure, not visual polish. Example: paste the brief, generate a 10-slide storyline, then refine the argument and flow before design.

Descript

Descript lets you edit audio and video through text. You edit the transcript like a document and the media follows. Example: clean up a leadership video by removing filler words, tightening sections, and exporting both a long version and short clips.

ElevenLabs

ElevenLabs generates natural-sounding speech from text and supports scalable voice workflows. It is useful for narration, localization, and voiceovers. Example: create a consistent “brand voice” narration for product explainers, then generate localized voiceovers without re-recording.

Lovable

Lovable is positioned as an AI-assisted way to build apps or web experiences without traditional engineering. Think prototypes, internal tools, and simple customer experiences. Example: describe an internal campaign intake tool, generate a prototype, then iterate requirements until it is usable.

Automation: make the handoffs run without nagging humans

Make

Make connects apps into workflows using triggers and actions. It is the plumbing that turns “good tools” into “a working line”. Example: when a brief is approved, create tasks, notify stakeholders, generate a first draft, and route it to review automatically.
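The trigger-and-action idea can be illustrated in a few lines of plain Python. To be clear, this is not Make's API or configuration format. It is a generic sketch of the pattern, and the event name `brief.approved`, the `on` and `emit` helpers, and the action functions are all hypothetical.

```python
from collections import defaultdict
from typing import Callable

# Registry of actions per trigger: event name -> list of action functions.
handlers: dict[str, list[Callable[[dict], str]]] = defaultdict(list)


def on(event: str):
    """Decorator that registers a function as an action for a trigger."""
    def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
        handlers[event].append(fn)
        return fn
    return register


@on("brief.approved")
def create_tasks(brief: dict) -> str:
    return f"tasks created for {brief['title']}"


@on("brief.approved")
def notify_stakeholders(brief: dict) -> str:
    return f"stakeholders notified about {brief['title']}"


@on("brief.approved")
def generate_first_draft(brief: dict) -> str:
    return f"first draft generated for {brief['title']}"


@on("brief.approved")
def route_to_review(brief: dict) -> str:
    return f"{brief['title']} routed to review"


def emit(event: str, payload: dict) -> list[str]:
    """Fire a trigger and run every registered action in registration order."""
    return [fn(payload) for fn in handlers[event]]


log = emit("brief.approved", {"title": "Q3 launch"})
print("\n".join(log))
```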

ChatAid

ChatAid is positioned as an AI support layer that can answer recurring questions and route issues. It fits both internal enablement and customer-facing support when designed with escalation rules. Example: answer “where is the latest asset” or “what is the policy”, and escalate to a human when confidence is low.
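An escalation rule of this kind usually reduces to a threshold check. The sketch below is a generic illustration, not ChatAid's actual behavior or API: the `route` function, the confidence score, and the 0.75 threshold are assumptions you would tune for your own context.

```python
def route(question: str, draft_answer: str, confidence: float,
          threshold: float = 0.75) -> dict:
    """Send the draft answer if confidence is high enough, else escalate."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return {"handled_by": "assistant", "reply": draft_answer}
    # Below the threshold: hand the question to a person along with the
    # draft, so the human starts from context rather than from zero.
    return {
        "handled_by": "human",
        "reply": None,
        "context": {"question": question, "draft": draft_answer},
    }


confident = route("Where is the latest asset?", "In the Q3 launch folder.", 0.92)
unsure = route("What is the policy on influencer gifting?", "Possibly clause 4.", 0.40)
print(confident["handled_by"], unsure["handled_by"])
```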

Distribution: move outputs into channels that drive outcomes

Revio

Revio is positioned around managing inbound conversations across social channels in one place. It helps teams respond consistently and not miss high-intent messages. Example: unify DMs so customer questions and sales inquiries do not get lost across platforms.

YourAtlas

YourAtlas is positioned around AI agents that can handle inbound qualification and booking. This matters in service businesses and lead-driven funnels. Example: handle inbound calls or requests 24/7, capture required details, then hand off qualified appointments to humans.

Membership.io

Membership.io supports structured memberships and gated content experiences. It is a distribution layer for expertise and ongoing value, not just content hosting. Example: package a learning path for partners or teams, with searchable resources and a community layer to reduce repeated questions.

BuddyPro

BuddyPro is positioned around turning your content and methods into an always-on assistant people can query. It is a distribution mechanism for expertise at scale. Example: clients query your “playbook assistant” for next steps between calls, and you control what it can and cannot answer.

Measurement: close the loop so the stack improves every cycle

Hiro Finance

Hiro Finance is positioned around cash-flow visibility and planning. It helps decision-makers see financial reality without spreadsheet archaeology. Example: run a weekly check on runway, recurring costs, and upcoming risk points before you scale spend.

HelloFrank

HelloFrank is positioned around deeper business-context finance insights. It can help detect spend anomalies and surface what changed month-over-month. Example: find subscription creep and cost spikes, then turn it into a prioritized cleanup plan.

Revaly

Revaly is positioned around payment performance and reducing failed transactions that create involuntary churn. It matters most where recurring revenue is sensitive to declines. Example: identify where legitimate payments fail and improve recovery rates without harming customer trust.

Precision

Precision is positioned around turning KPIs into a practical operating rhythm. It helps teams focus attention on what moved and what to do next. Example: generate a weekly performance brief that states which metrics shifted, the likely drivers, and what to test or fix this week.

How to build a working stack without buying 20 subscriptions

- Start with one workflow you ship weekly. Brief → assets → approvals → publish → measure.

- Assign ownership per step. Tools without owners become clutter.

- Build the handoffs before you add more tools. Automation is what turns tools into a line.

- Define where humans must decide. Brand-sensitive, compliance-sensitive, and customer-sensitive steps need a review point.

- Run a monthly keep-or-kill review. If a tool is not improving cycle time or quality, remove it.
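The keep-or-kill review in the last step can be reduced to a small, repeatable check. This is a rough sketch under assumptions: the `ToolReview` fields, the tool names, and the sample numbers are invented for illustration, and how you measure “quality” is up to your team.

```python
from dataclasses import dataclass


@dataclass
class ToolReview:
    """Before and after measurements for one tool (hypothetical fields)."""
    name: str
    cycle_days_before: float
    cycle_days_after: float
    quality_before: float  # e.g. share of assets approved on first review
    quality_after: float


def verdict(review: ToolReview) -> str:
    """Keep a tool only if it improved cycle time or quality."""
    faster = review.cycle_days_after < review.cycle_days_before
    better = review.quality_after > review.quality_before
    return "keep" if faster or better else "kill"


# Made-up sample data, not real measurements.
reviews = [
    ToolReview("deck builder", 5.0, 3.0, 0.60, 0.60),
    ToolReview("voice tool", 4.0, 4.0, 0.70, 0.70),
]
decisions = {r.name: verdict(r) for r in reviews}
print(decisions)
```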

A few fast answers before you act

What is the single biggest mistake teams make with AI tools right now?

They treat AI as a chat window to copy and paste from, instead of an execution layer connected to a workflow that ships outputs.

What is a “working AI tech stack” in one sentence?

A working AI tech stack is a small set of connected tools that reliably turns inputs like notes and briefs into shippable outputs, with minimal friction and clear handoffs.

How do I decide if a tool belongs in my stack?

If you cannot name the exact step it owns and the handoff it triggers, it is not part of the stack yet.

What should a marketing leader implement first?

One throughput line, end to end. Inputs → Synthesis → Creation → Automation → Distribution → Measurement. Then automate handoffs before adding new tools.

How do I avoid tool sprawl?

Set constraints: one tool per job, a clear owner, and a monthly keep-or-kill review tied to measured outcomes.