Microsoft brings holograms into the real world

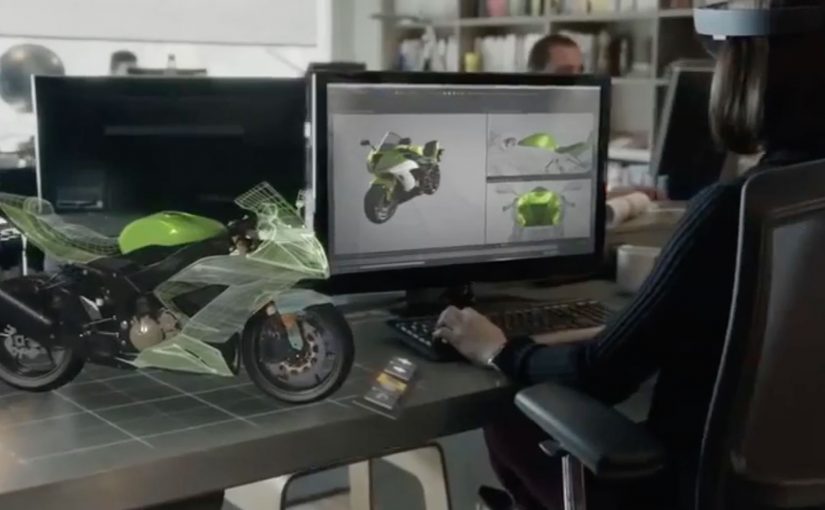

At its Windows 10 event, Microsoft unveils HoloLens, a new augmented reality experience for the platform.

Using a special holographic headset, Windows 10 users can make holograms appear in real life. Not on a screen. In the room, anchored to physical space.

This is the kind of step-change that reframes computing from something you look at to something you live inside.

In its demo, Microsoft presents holograms as spatial interfaces, not screen content.

What makes HoloLens different

HoloLens is positioned as an untethered augmented reality experience, built to feel like a real device rather than a lab prototype.

The device is said to use:

- See-through lenses

- Spatial sound

- Advanced sensors

- A dedicated holographic processing unit

Together, these elements aim to deliver a state-of-the-art mixed reality experience without cables or external trackers.

In this context, augmented reality means digital objects are layered into the real world through see-through optics, not a fully immersive virtual environment.

Why this matters

HoloLens signals a shift in interface design. Instead of dragging windows around a flat screen, digital objects become part of physical space. Apps turn into holograms. Workflows become spatial. Interaction becomes more natural because it maps to how people already understand the world.

For global digital product and marketing teams, the significance lies not just in the headset but in the move from screen-first design to space-first interaction.

Extractable takeaway: HoloLens is important because it presents AR not as a feature inside existing software, but as a new computing layer where interface, content, and context are all anchored to physical space.

What to steal from this launch

The real question is not whether holograms look futuristic. It is whether a new interface model changes behavior in a way people can feel immediately.

That is what this launch gets right. It demonstrates the shift through experience, not just specification. The message is simple: when a technology changes where interaction happens, it also changes how products should be designed.

- Lead with the interaction shift, not the feature list. Show what changes in the user’s behavior before explaining the underlying technology.

- Make the benefit visible in context. Demonstrate the experience in a real environment so people immediately understand the practical value.

- Use the demo as proof, not decoration. The strongest launch moments show the product working in the exact conditions users care about.

- Explain the stack after the experience lands. Once the audience feels the change, technical details reinforce credibility instead of creating friction.

- Design for the new interface model. If interaction moves from screens to space, content, UI, and workflows must be rethought for that environment.

A few fast answers before you act

Is HoloLens virtual reality?

No. It is augmented reality using see-through lenses that overlay holograms onto the real world.

What is the key technical promise?

Untethered, spatially aware holograms powered by sensors, spatial sound, and a dedicated holographic processing unit.

Why is being untethered important?

Untethered hardware makes the experience feel like a real computing device instead of a lab setup, which lowers friction for everyday use and demonstration.

What changes when apps become spatial?

The interface moves off the screen and into physical space, which changes how people place, view, and interact with digital content while moving through the real world.

What makes this feel like a new computing layer?

The shift is not only visual. It combines sensing, sound, and spatial anchoring so digital objects behave as if they belong in the room, not just on a display.