Someone holds up a Cadbury chocolate bar, opens the Spots v Stripes game, and the packaging itself becomes the trigger. The camera recognises the pack, the AR layer kicks in, and the game starts. The mechanic is simple: smack the ducks as fast as you can, then share your best score on social media.

The real question is whether on-pack augmented reality can turn packaging into a repeatable acquisition channel without adding friction that kills participation.

Packaging-triggered AR is worth shipping only when the first-use path is near-zero effort and recognition works reliably in the wild.

How Spots v Stripes starts

Cadbury launches an augmented reality gaming app activated via its chocolate bar packaging. The experience, called Spots v Stripes, is described as using Blippar’s image recognition technology and AR platform to recognise the pack and unlock the game.

Here, an “activation” simply means a brand experience that is unlocked by a concrete trigger, in this case the pack in front of the camera.

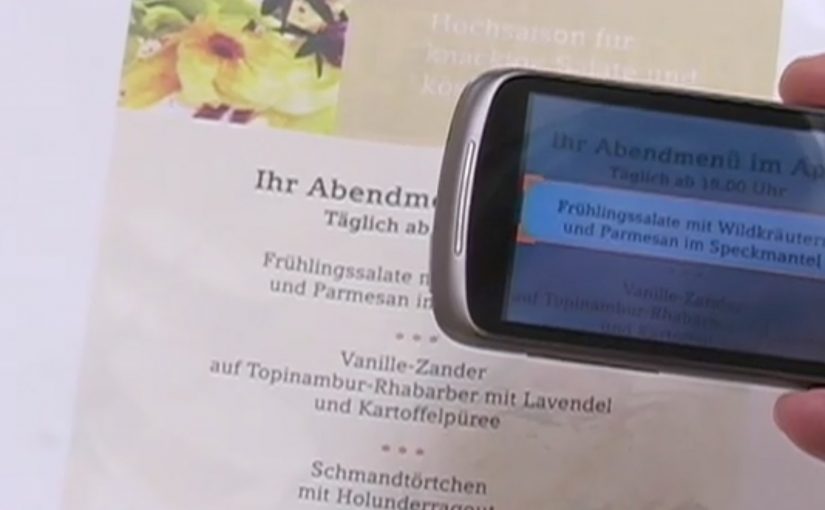

Image recognition is doing one job: matching what the camera sees to a known pack design so the app can load the right AR content.

The pack is the media unit

This is the part that matters. The “media” is not a poster or a banner. The media is the object people already hold in their hands.

Because the pack is already distributed, every bar on a shelf becomes a repeatable call-to-action surface, not just a container.

A lightweight game loop is a feature

The gameplay fits in one sentence: smack the ducks quickly, then post your score. That simplicity is not a limitation. It is a design choice that fits a packaging-triggered moment.

By “game loop” I mean the short action and feedback cycle that repeats every few seconds and makes the player try again.

When the trigger is the product in hand, a fast score chase works because there is no search step and the payoff is immediate.

For global FMCG (fast-moving consumer goods) teams that need scalable shopper engagement, on-pack AR can turn owned packaging into a repeatable interaction surface at the shelf.

Why a pack-triggered game travels

It lands because the pack gives you a reason to try right now, in the exact moment you already have the product. The social score adds light competitive tension, which makes the experience easier to retell and share.

Extractable takeaway: If the physical object is the trigger, design the experience so the first win happens in under 10 seconds. Anything slower belongs on a landing page, not on a pack.

What Cadbury is buying with this mechanic

This pattern aims to capture attention where paid media is weakest: the moment after purchase and before consumption. Done well, it can create a measurable interaction, a shareable score, and a reason for the pack to stay in view longer than a glance.

The trade-off is trust. If recognition fails or onboarding feels fiddly, the pack’s call-to-action can become noise and future packs may get ignored.

What to steal from this AR-on-pack build

If you cannot explain the first 10 seconds in one sentence that fits on packaging, you do not have an on-pack experience yet.

- Friction at first use. Make the first open, recognition, and first interaction feel obvious and rewarding.

- Recognition reliability. Test across lighting, angles, crumples, and partial occlusion. If it fails in a kitchen, it will fail in a store.

- Share mechanics. A score worth sharing needs a clear benchmark, a personal best, or a friends leaderboard, not just “I played it”.

- On-pack instruction. Give one clear nudge that does not compete with the brand block. One verb. One outcome.

- Measurement. Define success as scan rate, first-session completion, repeat play, and share rate. Not downloads alone.
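The recognition-reliability point in the list above can be planned as a simple test matrix. The following is a minimal sketch, not a description of how Cadbury or Blippar tested the pack: the four axes come from the list, but the condition levels (`daylight`, `kitchen`, and so on) and the helper names `build_test_matrix` and `pass_rate_by_level` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from itertools import product

# Hypothetical condition levels. The article names the axes
# (lighting, angles, crumples, partial occlusion) but not the values.
CONDITIONS = {
    "lighting": ["daylight", "kitchen", "store_shelf"],
    "angle": ["flat", "tilted"],
    "pack_state": ["smooth", "crumpled"],
    "occlusion": ["none", "partial"],
}


def build_test_matrix(conditions):
    """Return every combination of condition levels as a list of dicts."""
    axes = list(conditions)
    levels = (conditions[axis] for axis in axes)
    return [dict(zip(axes, combo)) for combo in product(*levels)]


def pass_rate_by_level(results):
    """Summarise recognition results per (axis, level).

    results: iterable of (case_dict, recognised_bool) pairs collected
    by whoever runs the manual or automated scan tests.
    """
    totals = defaultdict(int)
    passes = defaultdict(int)
    for case, recognised in results:
        for axis, level in case.items():
            totals[(axis, level)] += 1
            passes[(axis, level)] += int(recognised)
    return {key: passes[key] / totals[key] for key in totals}
```

Each dict returned by `build_test_matrix(CONDITIONS)` is one scenario to scan. Feeding the outcomes into `pass_rate_by_level` shows which single condition drags recognition down, which is more useful than one overall pass rate.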

A few fast answers before you act

What is this activation, in one line?

An AR mini-game called Spots v Stripes that starts when the app recognises Cadbury chocolate bar packaging.

What does the user do in the first 10 seconds?

They point the camera at the pack, the game appears, and they start “smacking the ducks” to rack up a score.

What technology is described as enabling it?

Blippar’s image recognition and AR app platform is described as the enabling layer that matches the pack and unlocks the content.

Why is packaging-triggered AR strategically interesting?

It turns every physical pack into an owned entry point to interactivity, without buying extra media inventory to get the first click.

What should you measure to know it worked?

Scan rate per pack exposure, first-session completion, repeat play within a week, and share rate per completed session.
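As a rough sketch, these four measures can be computed from raw funnel counts. The field names and the choice of denominators below are assumptions made for illustration: the article names the metrics but does not define how each one is calculated.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Hypothetical raw counts for one campaign period."""
    pack_exposures: int            # packs sold or otherwise put in front of people
    scans: int                     # successful pack recognitions
    completed_first_sessions: int  # first sessions played through to a score
    repeat_players_7d: int         # players who came back within a week
    completed_sessions: int        # all completed sessions, first or repeat
    shares: int                    # scores shared


def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def funnel_metrics(counts):
    """Compute the four rates; the denominators are assumed, not from the article."""
    return {
        "scan_rate": safe_ratio(counts.scans, counts.pack_exposures),
        "first_session_completion": safe_ratio(
            counts.completed_first_sessions, counts.scans
        ),
        "repeat_play_7d": safe_ratio(
            counts.repeat_players_7d, counts.completed_first_sessions
        ),
        "share_rate": safe_ratio(counts.shares, counts.completed_sessions),
    }
```

Keeping each rate tied to the step directly before it makes it easier to see where people drop out, for example a healthy scan rate followed by weak first-session completion points at onboarding rather than at the on-pack instruction.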

What usually breaks first, and how do you prevent it?

Recognition and onboarding. Prevent it by testing real-world lighting and handling, and by making the first reward happen almost instantly.