Airports are crowded with people from different backgrounds. This Christmas, KLM brings them together with Connecting Seats: two seats that translate every language in real time, so people from different cultures, with different world views and languages, can understand each other.

The experience design move

KLM does not try to deliver a holiday message. It creates a small, human interaction in a high-friction environment. You sit down. You speak normally. The seat itself lowers the barrier between strangers.

By turning translation into the interface, the seat makes the first move feel low-risk, which is why the interaction reads as human rather than branded.

The real question is how you turn a crowded, anonymous moment into a safe reason for two strangers to interact.

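In global travel hubs, language is only part of the barrier. Social friction is what keeps strangers from talking, and the seat removes the language problem so that the social one becomes easier to overcome.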

Why this works as a Christmas idea

Christmas campaigns often rely on film and sentiment. This one relies on participation: travelers complete the message by talking with a stranger instead of passively watching a story. That is a stronger holiday move than another sentimental film, because it makes connection visible and gives the brand a role that feels practical rather than promotional.

Extractable takeaway: If you want a brand to stand for connection, design a micro-interaction that reduces first-move risk, and let participants create the meaning.

The pattern to steal

If you want to create brand meaning in public spaces, this is a strong structure:

- Start with tension. Pick a real-world tension people already feel (crowded, anonymous, culturally mixed spaces).

- Add a simple intervention. Introduce a small change that shifts behaviour in the moment.

- Let interaction carry the message. The interaction does the work, not a slogan.

A few fast answers before you act

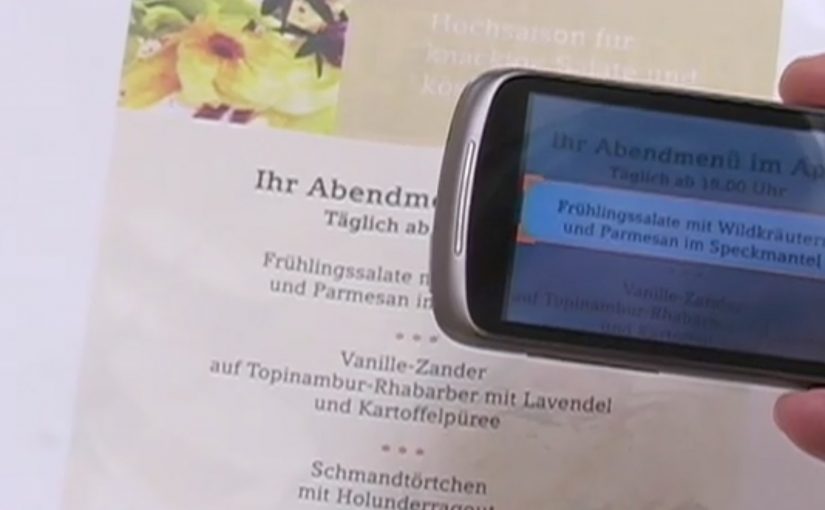

What are KLM Connecting Seats?

Two seats designed to translate conversation between languages in real time, so strangers who do not share a language can understand each other.

Where does this idea make sense operationally?

In airports and other transient spaces where people from different backgrounds sit near each other but rarely interact.

What is the core brand outcome?

A memorable, lived proof of “bringing people together,” delivered through an experience rather than a claim.

What makes this different from a typical holiday film?

It shifts the message from storytelling to doing. The brand creates the conditions for connection, then travelers complete the meaning through the interaction.

How can a non-airline brand use the same structure?

Find a public setting where strangers share waiting time, introduce a simple prompt that lowers the first-move risk, and let the interaction carry the message.