Manus AI is interesting because it changes the unit of AI adoption in marketing. The useful question is no longer whether AI can write better copy, but whether it can safely execute repeatable marketing work across tools, accounts and output formats.

Vaibhav Sisinty, founder of GrowthSchool, frames the hype in the video, but the useful part is the work pattern: browser shopping, download cleanup, Meta ads analysis, Slack triage, influencer research, prototype building and Telegram-based task handoff.

These are not glamorous use cases. They are the small operational gaps that make marketing teams slower than they should be: extracting data, checking dashboards, comparing options, building lists, scanning messages, formatting outputs and turning loose requests into usable artefacts.

The operating shift: from answer to action

Most marketing teams still use AI as an answer layer. They ask for ideas, summaries, drafts, research angles, prompt variants or campaign copy, and then people still move the work manually through browsers, spreadsheets, CMS workflows, ad platforms, project tools and approval chains.

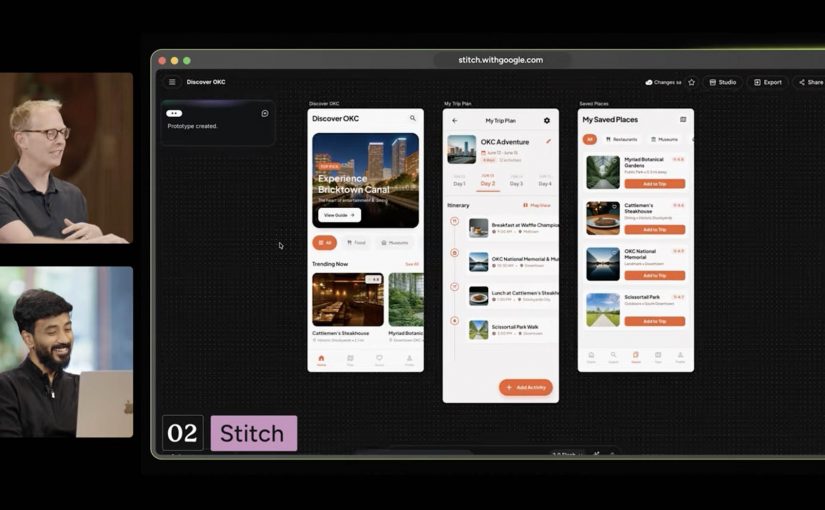

Manus describes itself as an action engine: an AI layer that can plan, execute and package work across tools, rather than only generate recommendations.

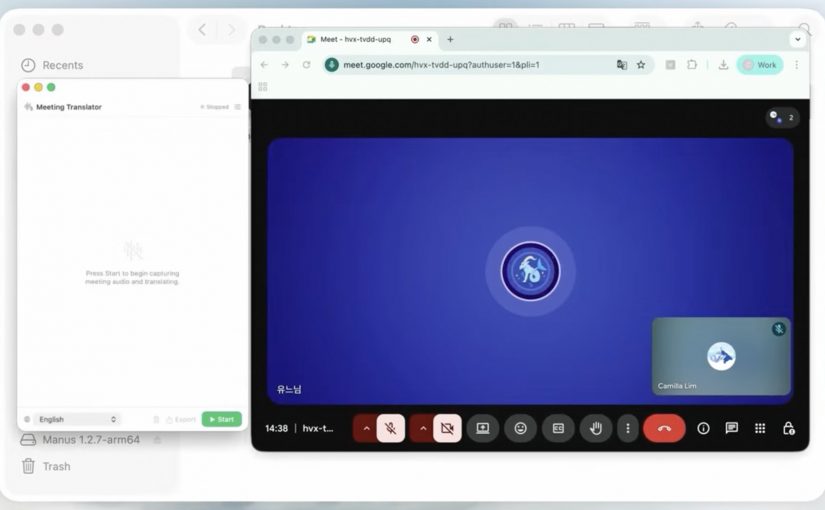

The mechanism is straightforward: Manus combines planning, browser operation, connectors, file access, code generation and output packaging, so a marketing request can move from prompt to finished artefact without being manually rebuilt in five separate tools.

For marketing teams, that puts the pressure point on operating model design, not on prompt novelty.

This mechanism matters because execution creates real business value only when the system can reach the right tools, use the right data, follow the right rules and hand back something a team can trust.

The marketing question: control before scale

The real question is whether a marketing organization can give any agent safe enough access, clear enough tasks and strong enough controls to make the output usable.

The stance here: treat Manus as a workbench for bounded execution, not as a replacement for marketing judgment.

In a real marketing stack, that distinction matters because the work crosses content systems, asset libraries, product data, CRM, analytics, ad platforms, consent, identity and approval workflows.

The Meta angle matters, but not as gossip

Manus still presents itself as part of Meta, while recent reporting says China has blocked the acquisition or ordered the transaction unwound. That tension deserves a brief mention, but it should not dominate the argument.

The business signal is not the takeover drama. It is that the market is moving from AI tools that advise marketers to AI systems that can sit closer to actual work.

That is why the Meta connection is relevant: ads, creators, messaging and business pages are workflow surfaces, not just media surfaces.

If an execution agent can sit near those surfaces, the commercial value is not another content generator. The value is a shorter distance between insight, action, packaging and follow-up.

Governance decides whether this scales

An agent that can open browsers, read accounts, analyze campaigns, create files, draft replies and ship prototypes is useful only when access rights, approval steps and logs are explicit. Before scaling it, marketing teams need to define which accounts can be touched, which actions are read-only, which outputs require human approval, which data is excluded and which records prove what happened.
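Those definitions can be written down as an explicit policy rather than left as team habit. The sketch below is a minimal illustration in Python, not a Manus API: the account names, action names and the `decide` helper are all hypothetical. It shows one way to encode allowed accounts, read-only actions, approval-gated actions and an audit record for every decision, with anything unlisted denied by default.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy model. None of these names come from Manus;
# they only illustrate the governance questions in the text.


@dataclass(frozen=True)
class AgentPolicy:
    allowed_accounts: frozenset           # accounts the agent may touch
    read_only_actions: frozenset          # actions allowed without review
    approval_required_actions: frozenset  # actions that need a human sign-off


@dataclass
class AuditLog:
    records: list = field(default_factory=list)

    def write(self, account, action, decision, approved_by):
        # Keep a record that proves what was requested and what was decided.
        self.records.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "account": account,
            "action": action,
            "decision": decision,
            "approved_by": approved_by,
        })


def decide(policy, log, account, action, approved_by=None):
    """Return 'allow', 'needs_approval' or 'deny', and log the decision."""
    if account not in policy.allowed_accounts:
        decision = "deny"
    elif action in policy.read_only_actions:
        decision = "allow"
    elif action in policy.approval_required_actions:
        decision = "allow" if approved_by else "needs_approval"
    else:
        decision = "deny"  # anything not listed is denied by default
    log.write(account, action, decision, approved_by)
    return decision


# Example policy with hypothetical account and action names.
policy = AgentPolicy(
    allowed_accounts=frozenset({"meta_ads_sandbox"}),
    read_only_actions=frozenset({"read_campaign_metrics"}),
    approval_required_actions=frozenset({"draft_reply"}),
)
log = AuditLog()
```

With this policy, reading campaign metrics on the sandbox account is allowed outright, drafting a reply returns `needs_approval` until a named reviewer is passed in, and any other account or action is denied. The deny-by-default branch is the design choice that matters: new capabilities stay off until someone deliberately adds them.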

Without these definitions, the failure mode is obvious. The agent becomes another shadow workflow, fast enough to bypass controls and persuasive enough to hide weak evidence.

That is also where adoption gets decided. People will not use an agent because it is magical; they will use it because it removes low-value work without making them responsible for invisible risk.

What marketing teams should operationalize

The practical move is not to connect everything at once. Start with bounded, reversible work: campaign monitoring, reporting summaries, initial lists of potential creators and influencers, content calendars, competitive scans, meeting follow-ups, prototype briefs and internal workflow cleanup. These jobs have enough friction to matter, enough structure to test, and low enough downside if a human reviewer stays in the loop.

Takeaway: Offerings like Manus AI are useful for marketing when they are treated as execution layers for controlled workflows, with clear access rules, human approval points, source checks, output QA and measurable time saved.

A few fast answers before you act

What is Manus AI?

Manus AI is a general-purpose AI agent designed to execute tasks, not just answer prompts. In marketing, that means it can support research, reporting, campaign analysis, workflow automation and prototype creation when access and review are controlled.

How is Manus different from ChatGPT or Claude?

ChatGPT and Claude are usually used as reasoning and drafting interfaces. Manus is positioned closer to an execution environment because it can use browser operation, connectors and output generation to turn a request into a finished artefact.

Should marketing teams connect Manus to real accounts?

Not without data governance and security review. Start with read-only access where possible, confirm what data leaves your environment, exclude sensitive customer or employee data, require human approval before external actions, and keep logs for every workflow that affects campaigns, customers or brand assets.
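Excluding sensitive data is the easiest of those steps to make concrete. As a rough sketch, assuming an export arrives as a list of row dictionaries, the Python below drops a hypothetical set of sensitive fields before anything is handed to an agent. The field names and the `strip_sensitive` helper are illustrative assumptions, not part of any Manus connector.

```python
# Hypothetical field names; adjust to whatever your own exports contain.
SENSITIVE_FIELDS = frozenset({"email", "phone", "employee_id"})


def strip_sensitive(rows, sensitive=SENSITIVE_FIELDS):
    """Return copies of the rows with sensitive fields removed."""
    return [
        {key: value for key, value in row.items() if key not in sensitive}
        for row in rows
    ]


export = [
    {"campaign": "spring_launch", "clicks": 1200, "email": "lead@example.com"},
    {"campaign": "retargeting", "clicks": 340, "phone": "555-0100"},
]
safe_export = strip_sensitive(export)
```

The original `export` is left unchanged, so the filtered copy is the only thing that needs to leave your environment. A blocklist like this only catches the fields you name, which is why it belongs alongside a security review rather than in place of one.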

Does the Meta acquisition story change the marketing argument?

Only slightly. The ownership story is unstable, but the operating lesson is stable: AI agents are moving closer to ads, creators, messaging, commerce and business workflows.

What is the best first use case for Manus in marketing?

Start with recurring analysis and packaging work. Weekly campaign summaries, potential creator and influencer lists, competitor scans and meeting-to-action-plan workflows are easier to govern than live publishing or customer-facing execution.