A real-time AI avatar is a video-based conversational agent that can listen, respond, and show synchronized facial movement during a live interaction.

Runway Characters is not just another image-to-video feature. It points to a bigger shift: interfaces that talk back, maintain expression, and sit inside websites, apps, support journeys and training environments as an interactive layer.

From chatbot box to embodied interface

For years, the consumer web has treated conversation as a text box. Runway Characters pushes the interaction into a more human-shaped format: a visual character with a voice, a defined personality, domain knowledge and live responsiveness.

The enterprise value is not the avatar; it is the controlled interaction layer around the avatar.

A controlled interaction layer is the set of rules, knowledge sources, permissions, actions, escalation paths and measurement signals that determine what the avatar can say and do.

This is why the product is more interesting for operators than for novelty-watchers. A branded face is easy to demo; turning it into a trusted, scalable and measurable service interface is the hard part.

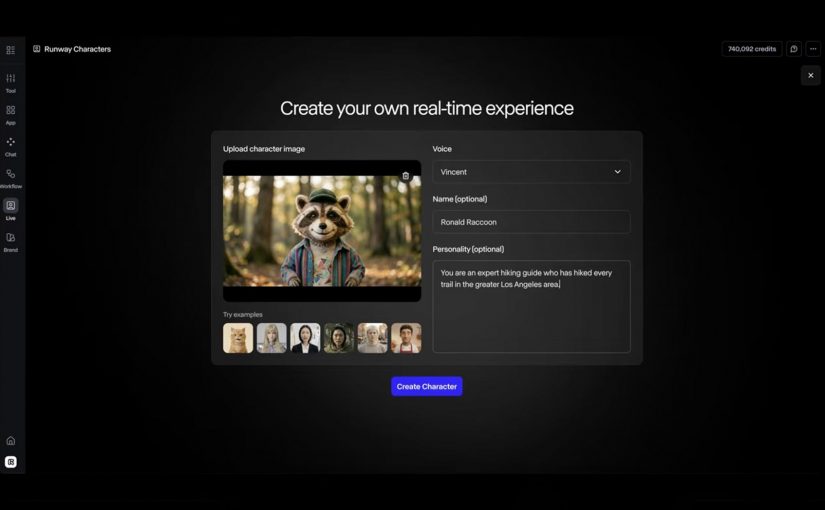

The mechanism: image, voice, knowledge and action

The mechanism is straightforward. A single reference image defines the character's appearance; voice and personality shape the interaction; a knowledge base keeps responses inside a domain; and API actions let the character do work rather than just talk.
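One way to picture those four ingredients is as a single configuration record. The sketch below is illustrative only: `CharacterSpec`, its field names and the sample values are assumptions made for this article, not Runway's actual schema or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSpec:
    """Hypothetical description of one avatar; not Runway's real schema."""
    reference_image: str            # the single image that defines appearance
    voice: str                      # illustrative voice identifier
    personality: str                # short persona description
    knowledge_sources: tuple = ()   # documents that bound the domain
    actions: tuple = ()             # names of API actions it may trigger

    def can_act(self) -> bool:
        """True when the character can do work, not only talk."""
        return len(self.actions) > 0


# Example values are invented for illustration.
advisor = CharacterSpec(
    reference_image="advisor.png",
    voice="warm-neutral",
    personality="Patient product advisor who asks clarifying questions.",
    knowledge_sources=("product-range.md", "returns-policy.md"),
    actions=("check_stock", "book_callback"),
)

talk_only = CharacterSpec("guide.png", "calm", "Friendly museum guide.")
```

Keeping actions as an explicit, enumerable field is the design point: a character with an empty `actions` tuple can only talk, which makes the step from conversation to execution a deliberate decision rather than a default.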

For enterprise teams, this turns the avatar from a creative asset into a governed service surface that sits between consumers, content, data and workflow.

A governed service surface is a customer-facing interface whose content, permissions, actions, analytics and escalation rules are deliberately controlled.

Because the avatar can combine expression, domain knowledge and actions in the same interaction, the experience can move from navigation to guided execution.

That is the commercial hinge. The avatar is not valuable because it smiles; it is valuable when it helps someone finish a task faster, with less confusion and fewer handoffs.

Where Runway Characters could create real utility

The obvious use cases are the ones Runway highlights: tutoring and education, customer support, training simulations, and interactive entertainment or gaming. Those are credible because the value depends on responsiveness, patience, expression and repetition.

The stronger enterprise use case is guided commerce and product selection. A character that understands a product range, asks clarifying questions, checks fit, explains trade-offs and hands off to the right next step could reduce decision friction in categories where consumers need guidance.

Brand and marketing experiences are another useful path, but only if they avoid becoming mascot theatre. A brand character should answer, guide, qualify, educate or convert; otherwise it is just a high-cost animation layer with weak business intent.

The real question is not whether the avatar looks impressive; it is whether the interaction reduces effort, shortens a service path, or improves a decision.

The operating model matters more than the character

The failure mode is predictable: teams launch a polished avatar before defining ownership, content governance, privacy boundaries, escalation logic and measurement. That creates a visible interface with unclear accountability.

For consumer experience platforms, the hard work sits behind the face. The avatar needs approved knowledge, consent-aware data access, clear action limits, analytics events, brand controls, QA scripts and a fallback path when confidence is low.
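Several of those controls (approved knowledge, action limits and a fallback path when confidence is low) can be expressed as a small gate that every candidate reply passes through before the avatar speaks. This is a minimal sketch under stated assumptions: `Draft`, `Policy`, `govern`, the reason strings and the 0.7 threshold are all hypothetical, and how a real system scores confidence is left open.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Draft:
    """A candidate reply produced upstream (hypothetical shape)."""
    text: str
    confidence: float               # 0.0 to 1.0, however the system scores it
    sources: tuple = ()             # knowledge entries the reply relied on
    action: Optional[str] = None    # action the avatar wants to trigger, if any


@dataclass(frozen=True)
class Policy:
    approved_sources: frozenset
    allowed_actions: frozenset
    min_confidence: float = 0.7     # illustrative threshold, not a recommendation


def govern(draft: Draft, policy: Policy) -> dict:
    """Return the reply to deliver, or an escalation with its reason."""
    if draft.confidence < policy.min_confidence:
        return {"type": "escalate", "reason": "low_confidence"}
    if not set(draft.sources) <= policy.approved_sources:
        return {"type": "escalate", "reason": "unapproved_source"}
    if draft.action is not None and draft.action not in policy.allowed_actions:
        return {"type": "escalate", "reason": "action_not_permitted"}
    return {"type": "respond", "text": draft.text, "action": draft.action}


policy = Policy(
    approved_sources=frozenset({"returns-policy"}),
    allowed_actions=frozenset({"book_callback"}),
)
low = govern(Draft("I think so?", confidence=0.4), policy)
# low == {"type": "escalate", "reason": "low_confidence"}
```

Returning a reason with every escalation keeps the fallback path measurable: the same codes can feed analytics events and QA scripts.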

This also changes the content model. Product information, policy content, service scripts and training material need to be structured enough for a live character to use safely, not just published as static pages for humans to browse.
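To make "structured enough" concrete, here is a hedged sketch of what a single knowledge entry might carry beyond its text. The `KnowledgeEntry` fields (owner, approval flag, review date) and the `usable_entries` helper are assumptions chosen for illustration, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class KnowledgeEntry:
    """Hypothetical unit of content a live character is allowed to use."""
    entry_id: str
    topic: str
    body: str
    owner: str          # team accountable for keeping the entry correct
    approved: bool      # has it passed content governance?
    review_by: date     # after this date the entry is treated as stale


def usable_entries(entries, today):
    """Keep only entries that are approved and not past their review date."""
    return [e for e in entries if e.approved and e.review_by >= today]


# Sample data is invented for illustration.
entries = [
    KnowledgeEntry("kb-1", "returns", "Returns text.", "support", True, date(2030, 1, 1)),
    KnowledgeEntry("kb-2", "pricing", "Draft pricing text.", "sales", False, date(2030, 1, 1)),
    KnowledgeEntry("kb-3", "warranty", "Old warranty text.", "legal", True, date(2020, 1, 1)),
]
live = usable_entries(entries, today=date(2025, 6, 1))
```

A static page can stay published long after it is out of date; a record like this lets the avatar drop unapproved or stale content automatically, which is the practical difference between content for browsing and content for a live character.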

Runway Characters takeaway for enterprise teams

Runway Characters should be evaluated less like a creative tool and more like a new front-end pattern for service, learning, commerce and brand interaction. The adoption question is not “can we make a character?” but “which consumer or employee journey deserves a live conversational interface, and can we govern it?”

Takeaway: Treat real-time AI avatars as governed service surfaces, not animated brand assets. The winning teams will connect character design to knowledge governance, journey ownership, action permissions, measurement and fallback logic before scaling the experience.

A few fast answers before you act

What is Runway AI?

Runway is an AI company building generative media tools and world-simulation research systems. Runway describes its mission as building AI to simulate the world through the merging of art and science.

What is Runway Characters?

Runway Characters is Runway’s real-time avatar product for creating conversational video characters with customizable appearance, voice, personality, knowledge and actions.

Why does it matter for brands?

It matters because it can turn static content, support flows and training material into live guided interactions that feel more natural than a text-only chatbot.

What are the best first use cases?

The best first use cases are narrow, repeatable journeys where guidance reduces effort: product advice, customer support triage, onboarding, training practice and education.

What is the main enterprise risk?

The main enterprise risk is launching a convincing avatar without clear governance over what it knows, what it can say, what it can do and when it must escalate.

How should teams measure success?

Teams should measure task completion, deflection quality, conversion support, time saved, escalation rate, user satisfaction and the cost of maintaining the knowledge base.
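Two of those measures, task completion and escalation rate, can be computed from a plain event log. The sketch below assumes a hypothetical log of `(session_id, event_name)` pairs and invented event names (`started`, `task_completed`, `escalated`); a real analytics setup will differ.

```python
from collections import defaultdict


def journey_metrics(events):
    """Compute per-session rates from (session_id, event_name) pairs."""
    by_session = defaultdict(set)
    for session_id, name in events:
        by_session[session_id].add(name)

    total = len(by_session)
    if total == 0:
        # No sessions yet: report zeros instead of dividing by zero.
        return {"sessions": 0, "task_completion_rate": 0.0, "escalation_rate": 0.0}

    completed = sum("task_completed" in names for names in by_session.values())
    escalated = sum("escalated" in names for names in by_session.values())
    return {
        "sessions": total,
        "task_completion_rate": completed / total,
        "escalation_rate": escalated / total,
    }


# Sample log is invented for illustration.
events = [
    ("s1", "started"), ("s1", "task_completed"),
    ("s2", "started"), ("s2", "escalated"),
    ("s3", "started"), ("s3", "task_completed"),
    ("s4", "started"),
]
report = journey_metrics(events)
# report == {"sessions": 4, "task_completion_rate": 0.5, "escalation_rate": 0.25}
```

Counting per session rather than per event avoids inflating the rates when one conversation emits the same event several times.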