TV viewing is overdue for a real change

The TV viewing experience has not changed drastically in years. Screens get bigger, resolution gets better, interfaces get smarter. But the core behavior stays familiar.

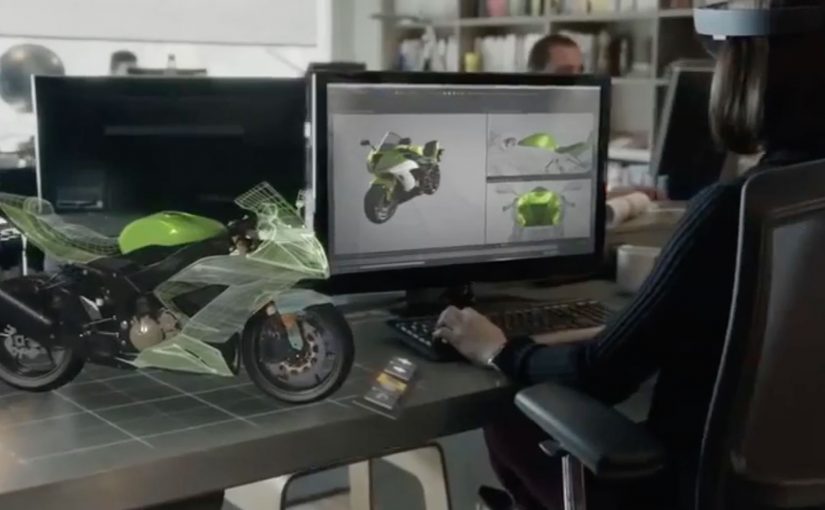

That is why sophisticated headsets like Microsoft HoloLens feel like a genuine breakpoint.

They do not just improve the screen. They change the environment around it.

Microsoft and the NFL re-imagine the Super Bowl

In a recently released video, Microsoft and the NFL re-imagine how a Super Bowl game could be watched with multiple friends and family members.

The scenario pushes beyond passive viewing. It turns the living room into an interactive layer, where the game experience becomes more immersive, more social, and more spatial.

By spatial, I mean the content is anchored to the room, not confined to the TV frame.

This is the kind of concept that makes the future of TV feel tangible.

In mass-market entertainment, the constraint is not what immersive concepts can show, but when consumer hardware becomes affordable, comfortable, and mainstream.

Why this lands for co-viewing

TV should prioritize co-viewing, meaning multiple people watching and reacting together in the same room, because a shared, spatial layer creates viewer control that a single rectangle cannot. The real question is whether you are designing for shared viewer control in the room, or just adding data overlays to a screen.

Extractable takeaway: When you move sports content into the room, design the experience around shared reference points, lightweight interaction, and conversation pacing, not around more screen real estate.

Immersive viewing is real. Consumer timing is not

The video shows how immersive TV watching can get. But Microsoft is not fast-tracking HoloLens for a consumer release.

For now, only developers can order HoloLens, with units shipping this year.

No one knows when consumers will get access, or when scenarios like this will become a reality.

That uncertainty is part of the story. The vision is clear. The rollout timeline is not.

Steal these design cues for living-room sports

- Design for the room. Treat the TV as one surface among many, then anchor the key moments and data where people naturally look and point.

- Make co-viewing explicit. Support multiple viewers and viewpoints, so participation feels shared instead of “one person driving.”

- Prototype for constraints. Assume headsets stay niche for a while, and test what still works when only one person has the device.

A few fast answers before you act

Is this still “TV” or something else?

It starts as TV content, but behaves more like a shared, spatial experience than a single screen.

What is the core shift headsets enable?

They move content off the rectangle and into the room, so viewing becomes environmental and interactive.

What is the biggest constraint right now?

Availability and consumer readiness. Until mainstream hardware adoption happens, this remains concept-led.

What should experience designers take from this?

Design for co-viewing and spatial context. Multiple people, multiple viewpoints, and shared interaction become first-class requirements.

What should you prototype first?

Prototype the simplest “shared moments” layer, so two to four people can compare and discuss the same play without anyone leaving the game flow.