Lynx does something smart and very “of its time.” It takes the messy, awkward first 20 seconds of talking to someone offline, and it turns that moment into a mobile toolkit. Here, “toolkit” means lightweight, in-the-moment utilities you can pull up on your phone to create an opening.

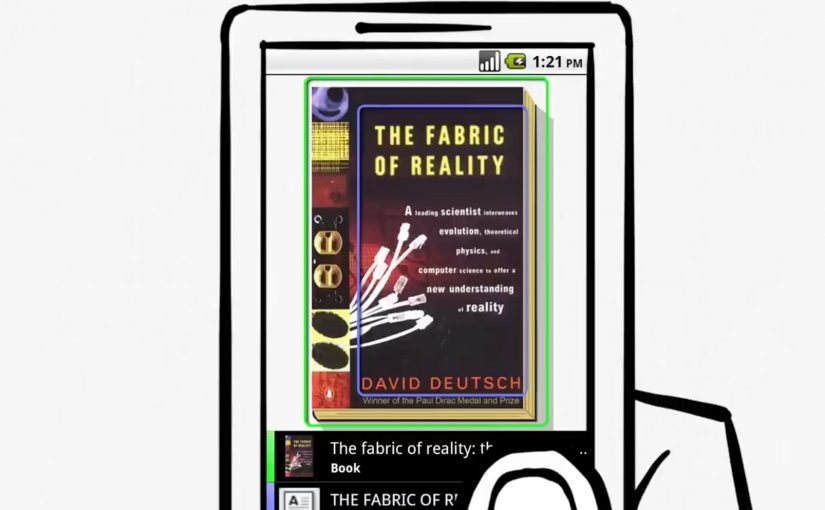

BBH London releases a second round of mobile “pickup tools” for Lynx’s “Get In There” campaign. The promise is simple. Give young guys digital tips, tricks, and small utilities that help them make the leap from online confidence to real-world interaction. The tools are built as icebreakers you can actually use in the moment, not just a brand message you nod at and forget.

The idea, stripped down

Turn “offline dating” anxiety into a set of mobile utilities that create an opening.

What the toolkit looks like

The campaign centers on a suite of mobile experiences backed by video content. Three apps sit at the heart of the set: “Say Cheese,” “Spin The Bottle,” and “Perfect Man Revealed.”

Say Cheese plays with the “take my photo” moment to create a surprise reveal.

Spin The Bottle gamifies group energy and removes the “who do I choose” tension.

Perfect Man Revealed reframes a quiz into a playful personal reveal.

The pattern matters more than the specifics. Each tool is designed to create a socially acceptable reason to start an interaction, then let the person take it from there.

In youth-focused consumer brands, the winning use of mobile is often to reduce in-the-moment social friction, not to replace the interaction.

The real question is whether your digital work helps people take the next awkward step in the real world.

When you want behavior change, utility-first beats message-first.

Why this works as marketing, not just “a funny app”

Most brand campaigns try to persuade with claims. This one tries to equip with utility. By making the icebreaker the mechanic, the brand shows up at the moment of action, which is why it sticks.

Extractable takeaway: When the behavior is awkward, ship a small, optional utility that creates a socially acceptable opening, then get out of the way and let the human interaction do the work.

- It inserts the brand into behavior, not media. If the tool gets used, the brand is present at the exact moment the customer cares, not ten minutes later in a recall survey.

- It makes “digital to physical” a real bridge. A lot of digital work stops at clicks. Here, the mechanic is literally about translating screen confidence into real-world action.

- It scales with video and gets remembered through the gag. The utility is the hook. The humor is the memory device. Video content becomes the distribution layer that makes a niche behavior hack feel like a mainstream campaign.

- It is brand-consistent without being product-heavy. The “Lynx Effect” idea is not explained. It is implied. The campaign behaves like an accomplice to confidence, which is exactly what the brand wants to stand for.

The deeper point

This is early evidence of a direction many brands move toward: marketing that ships as tools, not just communications.

Instead of asking for attention, the brand earns a place in real life by being useful in a situation people actually want help with.

Patterns to borrow when you ship tools

- Start with the awkward moment. Pick the one moment people avoid because it feels risky. Then design a tool that reduces the social friction in that moment.

- Make the utility the hero. If the only payoff is “branding,” people drop it. If the payoff is a usable social script, they try it once, and that is often enough to create talk value.

- Design for respect and consent, even when the creative is cheeky. When you play in dating and social dynamics, the difference between playful and creepy is not subtle. Build mechanics that leave choice and comfort with the other person, not tricks that corner them.

A few fast answers before you act

What is Lynx “Get In There” trying to do?

It aims to help guys get offline and start real-world interactions, using tips, tricks, and mobile tools as icebreakers.

What makes these tools different from standard mobile ads?

They are designed to be used in the moment, not just consumed. Utility first, branding second.

Which apps are part of the toolkit?

“Say Cheese,” “Spin The Bottle,” and “Perfect Man Revealed.” Each is designed to create a simple opening for real-world conversation.

What is the reusable marketing lesson?

If you can turn a customer’s friction point into a simple tool that helps them act, you move from awareness to behavior.

What is the main risk with this kind of idea?

If the mechanic crosses into manipulation, it backfires. The tool must stay playful, optional, and respectful.