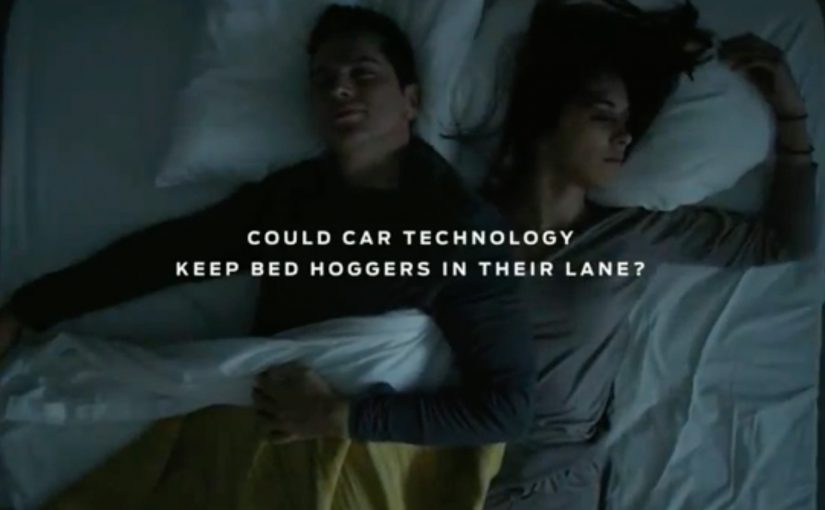

Ford Europe has unveiled a “Lane-Keeping Bed” designed to give partners equal amounts of sleeping space. The idea was inspired by the driver-assist technology that helps prevent unintentional lane drifting in newer models such as the 2019 Ford Ranger.

As demonstrated in Ford’s video, pressure sensors detect when an active dreamer strays to the opposite side of the mattress and trigger an integrated conveyor belt that moves them back where they belong.

Like Ford’s noise-cancelling dog kennel, the Lane-Keeping Bed is only a prototype, part of the company’s “Interventions” series of innovations that extend beyond the car industry.

What makes this more than a gimmick

The best part of this idea is how clearly it translates a car behavior into a home behavior. Lane-keeping detects a vehicle drifting out of its lane and gently guides it back. Here, the drifting object is a sleeping person, and the “guidance” is a slow conveyor movement that restores the boundary without turning the moment into a fight. That matters because it turns a familiar assistive correction into a domestic fix people can grasp in seconds.

Why it works as a brand signal

Ford’s “Interventions” framing matters. It positions the company’s tech capabilities as transferable. Sensors, assistive correction, and comfort innovations are not locked inside vehicles. They can show up wherever people experience everyday friction.

This works because Ford is not pretending to sell beds. It is using the prototype to make its driver-assist logic easier to notice, remember, and talk about.

In consumer brands, the fastest way to make a technical capability stick is often to place it inside an everyday tension people already recognize.

The real question is whether a brand can make an assistive technology feel useful, human, and memorable outside its core category.

Extractable takeaway: When a product behavior is hard to explain in its native category, move it into a familiar everyday setting where the tension is obvious and the benefit can be seen instantly.

What to borrow if you build products or campaigns

- Start from a real tension. Mattress hogs are a universal problem, and the benefit is instantly understood.

- Make the mechanism visible. Pressure sensors paired with a moving belt are easy to demonstrate, so the story travels.

- Prototype to communicate capability. Even if it never ships, it can reframe what your brand is “good at”.

A few fast answers before you act

What is Ford’s Lane-Keeping Bed?

It is a prototype bed concept that uses pressure sensors and an integrated conveyor belt to move a drifting sleeper back to their side of the mattress.

What inspired the idea?

It was inspired by Ford’s driver-assist technology that helps prevent unintentional drifting in vehicles like the 2019 Ford Ranger.

How does it detect someone moving across the bed?

Pressure sensors detect when a sleeper strays to the other side, then trigger the conveyor belt response.

Is this a real product for sale?

No. It is presented as a prototype within Ford’s “Interventions” series, which explores ideas beyond the car industry.

What is the main takeaway?

Take a capability you already own. Translate it into a different everyday context where the tension is obvious and the benefit is immediate.