The pitch is familiar: “fastest network.” The execution is not. Vodafone Germany turns the claim into a street-level AR game where your city becomes the arena and “Buffer Monsters” become the enemy.

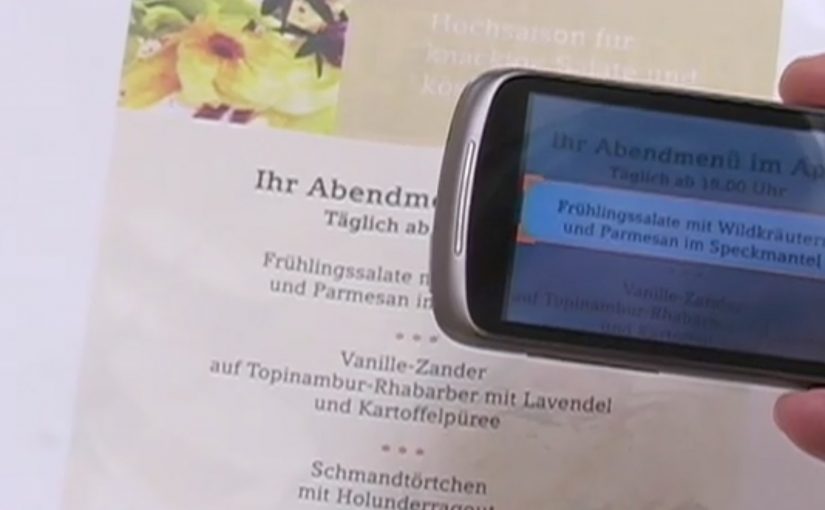

You walk around with an iPhone or Android smartphone, spot the monsters through the camera view, and capture them. Once you’ve banked 50, you take them to a nearby Vodafone store to “dump” them and keep playing. Top performers compete for a lifetime plan.

Gamified AR is a neat way to convert an abstract network promise into something people can experience with their own movement and time.

Turning buffering into a villain you can catch

The smartest move here is the metaphor. “Buffering” is a universal pain, so the campaign gives it a face, then gives you a job: remove slowness from the streets.

That story does two things at once. It makes the “fast network” positioning emotionally legible. It also creates a reason to keep playing beyond novelty, because the monsters represent a real frustration.

The mechanic: capture loop, then a store-based reset

The gameplay loop is intentionally simple:

- Discover: find monsters while moving through real locations.

- Capture: use the phone view to trap them.

- Capacity cap: collect up to 50 before you hit the limit.

- Reset in retail: visit a Vodafone store to unload the bank and continue.

The cap is not just game balance. It is the bridge to the business goal: repeat footfall into stores without making the experience feel like a coupon hunt.
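For readers who think in code, the loop above can be sketched as a tiny state machine. This is only an illustration under stated assumptions, not how the actual app is built: the `Player` class, its field names, and the idea of counting dumped monsters as a score are all hypothetical. Only the cap of 50 and the store reset come from the campaign description.

```python
from dataclasses import dataclass

MONSTER_CAP = 50  # the capacity cap described in the campaign


@dataclass
class Player:
    """Hypothetical player state for the capture loop."""
    banked: int = 0        # monsters currently held
    total_dumped: int = 0  # assumed lifetime score (not confirmed by the source)
    store_visits: int = 0  # how often the player reset in retail

    def capture(self) -> bool:
        """Try to capture one monster; fails once the bank is full."""
        if self.banked >= MONSTER_CAP:
            return False
        self.banked += 1
        return True

    def dump_at_store(self) -> int:
        """Unload the bank at a store and return how many were dumped."""
        dumped = self.banked
        self.total_dumped += dumped
        self.banked = 0
        self.store_visits += 1
        return dumped


player = Player()
# Sixty capture attempts: the last ten fail because the bank is full.
results = [player.capture() for _ in range(60)]
dumped = player.dump_at_store()
```

The point of the sketch is the failing `capture()` call: once the bank is full, the only way to make progress is the store visit, which is exactly where the business action sits.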

In German consumer telecom marketing, a speed claim becomes believable when people can test it with their own time and movement.

The real question is whether you can turn an abstract promise into a repeatable challenge people want to complete and retell.

Why it lands: it makes speed social and competitive

This works because it turns “my network is fast” into a contest people can prove with their own time and movement. Players are not only consuming a message. They are choosing when to play, where to hunt, and how hard to push the leaderboard, which makes the brand message feel earned rather than delivered.

Extractable takeaway: When your promise is hard to verify, build a simple loop that lets people demonstrate it, then let competition and player control do the persuasion.

What Vodafone is really optimizing for

On the surface, it is an AR advergame, meaning a branded game built to carry a marketing message through play. Underneath, it is a store traffic engine plus a positioning reinforcer. The store visit is framed as part of the fantasy, so retail becomes a checkpoint, not an interruption.

It is also a clean way to recruit advocates. The people who do best are the ones most likely to talk about it, because the game gives them a score they can brag about.

Steal this capture loop for your next launch

- Personify the pain point so the product promise has an enemy to defeat.

- Add a capacity cap to create natural “reset moments” that map to business actions.

- Make the brand touchpoint (a store, event, or partner location) a checkpoint, not a forced detour.

- Design for retell: “I caught 50 monsters and had to dump them at a store” is a complete story.

The TV commercial (TVC) supporting the initiative is also well done, and it quickly explains the mythology to people who never touch the app.

A few fast answers before you act

What is Buffer Busters, in one line?

An AR street game from Vodafone Germany where you hunt “Buffer Monsters” with your phone, then reset your collection by unloading them at Vodafone stores.

Why does the “50 monsters” limit matter?

It creates a loop. Players hit a cap, then have a reason to visit a store to continue, which turns gameplay momentum into retail footfall.

What business problem does this solve beyond awareness?

It converts a network claim into participation, drives repeat store visits, and builds competitive motivation through leaderboards and prizes.

What makes the story-device strong here?

Buffering is a universal frustration. Turning it into a villain gives the “speed” promise a concrete, memorable meaning.

What is the biggest failure mode for AR hunts like this?

Friction. If discovery is unreliable, capture feels inconsistent, or permissions and setup are confusing, people drop before the loop becomes rewarding.