Viral video creation is shifting from a production task to an operating-model question, and Topview AI is a useful example.

For years, short-form performance video lived in two modes: manual production, which is slow and expensive, or template-based generators, which are faster but still force a lot of manual rework.

Now a third mode is emerging: AI Video Agents, meaning systems that take a short brief plus a few inputs and generate a complete multi-shot draft you can iterate on.

The shift is simple. Instead of editing frame by frame, you brief the outcome and, optionally, provide a reference viral video. The agent then recreates the concept, pacing, and structure for your product in minutes. Your job becomes direction, constraints, and iteration, not timelines.

Meet the AI Video Agent “three inputs” workflow

Topview’s core promise is “clone what works” for short-form marketing.

Upload your product image and/or URL so the system extracts what it needs. Share a reference viral video so it learns the shots and pacing. Get a complete multi-shot video that matches the reference style, rebuilt for your product.

That is the operational unlock. You stop asking a team to invent from scratch every time. You start generating variants of formats that already perform, then iterate based on outcomes.

In enterprise teams, that makes this less a content toy and more a new layer in the performance-creative operating model, where briefing quality, asset governance, and measurement discipline matter more than raw production capacity.

That changes what teams need to get right. Faster generation only creates value when the workflow improves how quickly the team learns what to scale.

What “cloning winning ads” really means

This is not about copying someone’s assets. It is about cloning a repeatable pattern.

Extractable takeaway: When a workflow can reliably regenerate a proven creative structure, the bottleneck shifts from making assets to choosing angles, proof, and guardrails that improve one test at a time.

High-performing short-form ads tend to share the same backbone. A strong opening. A clear value moment. Proof. A simple call-to-action. The variable is the angle and execution. Not the structure.

AI video agents are optimized to reproduce that backbone at speed, then let you steer the angle. Because the agent reuses a proven structure, you can spend your time on angles and proof instead of rebuilding the format. That is why they matter for performance teams: the advantage is iteration velocity. The risk is sameness if you do not bring differentiation in offer, proof, and brand voice.

What to evaluate beyond the AI Video Agent headline

I would not judge any platform by a single review video. I would judge it by whether it covers the tasks that constantly slow teams down.

From the “creative tools” surface, Topview positions a broader toolbox around the agent: AI Avatar and Product Avatar workflows, plus “Design my Avatar”; LipSync; Text-to-Image and AI Image Edit; Product Photography; Face Swap and character swap workflows; Image-to-Video and Text-to-Video; and AI Video Edit.

This matters because real creative operations are never “one tool.” They are a chain. The more of that chain you can keep inside one workflow, the faster your test-and-learn loop becomes.

The practical question is whether that workflow plugs cleanly into your brand-asset flow, approval model, paid-social activation, and testing cadence without creating new review debt.

Topview alternatives: choose by workflow role, not by hype

If you are building an enterprise creative stack, choose these tools by workflow role, asset control, and measurement fit, not by demo quality.

HeyGen

HeyGen positions itself around highly realistic avatars, voice cloning, and strong lip-syncing, plus broad language support and AI video translation. It also supports uploading brand elements to keep outputs consistent across projects. Compared to Topview’s short-form ad focus and beginner-friendly “quick publish” style workflow, HeyGen is often the stronger fit when avatar-led and multilingual presenter content is your primary format.

Synthesia

Synthesia is typically strongest for presenter-led videos, especially training, internal communications, and more corporate-grade marketing explainers. Compared to Topview’s short product ad focus, Synthesia is often the cleaner fit when a human-style presenter is the core format.

Fliki

Fliki stands out when your workflow starts from existing assets and needs scale: blogs, slides, product inputs, and team updates converted into videos with avatars and voiceovers, plus a large set of voice and translation options. Use Fliki when you want breadth and flexibility in avatar and voiceover production. Use Topview AI when your priority is creating short videos from links, images, or footage with minimal workflow friction.

Operating moves for AI video agents

The real question is whether your team can turn production that takes minutes into a disciplined iteration system without losing distinctiveness.

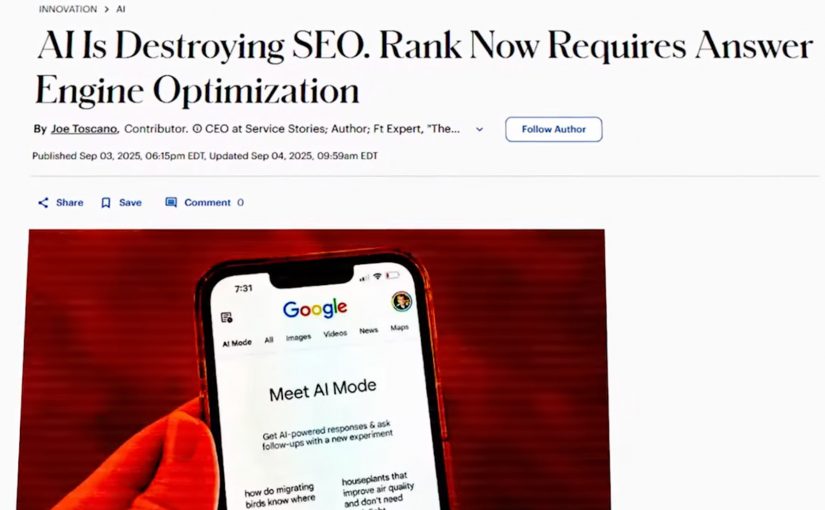

My take is that viral content is no longer mainly a production problem. It is an operating-model problem, because speed only compounds value when briefs, proof, guardrails, and learning loops are already in place.

- Brief for outcomes, not assets. Define the hook, value moment, proof, and CTA before you generate variants.

- Constrain sameness early. Put brand voice, offer boundaries, and “do not do” rules into the brief so speed does not turn into remix culture.

- Run a ruthless learning loop. Test fewer, better variants. Kill quickly. Scale only what proves incremental lift.
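One way to make the first two moves concrete is to treat the brief as structured data that gets checked before anything is generated. The sketch below is a minimal illustration, not Topview's API or any platform's schema: the `CreativeBrief` class, its field names, and the `missing_fields` and `guardrail_hits` helpers are all hypothetical, and the guardrail check is a naive substring match.

```python
from dataclasses import dataclass, field


@dataclass
class CreativeBrief:
    """Hypothetical brief structure; field names are illustrative only."""
    hook: str = ""
    value_moment: str = ""
    proof: str = ""
    cta: str = ""
    brand_voice: str = ""
    do_not: list[str] = field(default_factory=list)  # "do not do" phrases


# The backbone the brief should define before any variant is generated.
REQUIRED = ("hook", "value_moment", "proof", "cta")


def missing_fields(brief: CreativeBrief) -> list[str]:
    """Return the required backbone fields that are still empty."""
    return [name for name in REQUIRED if not getattr(brief, name).strip()]


def guardrail_hits(script: str, brief: CreativeBrief) -> list[str]:
    """Return the 'do not do' phrases found in a draft script (case-insensitive)."""
    lowered = script.lower()
    return [rule for rule in brief.do_not if rule.lower() in lowered]


# Example brief with the proof still missing (all values are made up).
brief = CreativeBrief(
    hook="Stop scrubbing pans",
    value_moment="Wipes clean in one pass",
    proof="",
    cta="Try it today",
    do_not=["guaranteed", "best in the world"],
)

print(missing_fields(brief))
print(guardrail_hits("Guaranteed results in one pass", brief))
```

A team could run a check like this as a gate: no generation until `missing_fields` is empty, and no publishing while `guardrail_hits` returns anything for the draft script.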

Which viral video would you recreate first? And what would you change so it is unmistakably yours, not just a remix?

A few fast answers before you act

What does “clone winning ads” actually mean?

It usually means generating new variants that reuse the structure of high-performing creatives. The goal is to speed up iteration, not to copy a single ad one-to-one.

Is this ethical?

It depends on what is being “cloned.” Reusing your own learnings is normal. Copying another brand’s distinctive IP, characters, or protected assets crosses a line. Governance and review matter.

What will still differentiate brands if everyone can produce fast?

Strategy, customer insight, and taste. If production becomes cheap, the competitive edge moves to positioning clarity, creative direction, and the quality of testing and learning loops.

How should teams use this without flooding channels with slop?

Use strict briefs, clear brand guardrails, and a limited hypothesis set. Test fewer, better variants. Kill quickly. Scale only what proves incremental lift.
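As a rough illustration of "kill quickly, scale only what proves lift," the sketch below compares a variant's conversion rate to a control and returns a decision. Everything here is an assumption for illustration: the function names, the `min_impressions` and `scale_lift` thresholds, and the sample numbers are hypothetical. A simple rate comparison like this is not a substitute for a proper incrementality test with holdouts and significance checks.

```python
def conversion_rate(conversions: int, impressions: int) -> float:
    """Conversions divided by impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return conversions / impressions


def relative_lift(variant_rate: float, control_rate: float) -> float:
    """Relative lift of the variant over the control, e.g. 0.2 means +20%."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


def decide(variant: tuple[int, int], control: tuple[int, int],
           min_impressions: int = 5000, scale_lift: float = 0.10) -> str:
    """Return 'scale', 'kill', or 'keep testing' for one variant.

    variant and control are (conversions, impressions) pairs.
    The thresholds are illustrative defaults, not recommendations.
    """
    v_conv, v_imp = variant
    c_conv, c_imp = control
    if v_imp < min_impressions:
        return "keep testing"  # not enough data to judge either way
    lift = relative_lift(conversion_rate(v_conv, v_imp),
                         conversion_rate(c_conv, c_imp))
    if lift >= scale_lift:
        return "scale"
    if lift <= 0:
        return "kill"
    return "keep testing"


control = (100, 10000)  # made-up numbers
print(decide((120, 10000), control))
print(decide((90, 10000), control))
print(decide((10, 1000), control))
```

Keeping the hypothesis set small matters here: the fewer variants you compare against the control, the less likely a "winner" is just noise.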

What is the biggest risk?

Over-optimizing for short-term clicks at the expense of brand meaning, trust, and distinctiveness. High-volume iteration can become noise if the work stops saying something specific.